- Sep 24, 2025

EU AI Act: A New Standard for Trust, Safety, and Policy in the Age of Artificial Intelligence

- Apr 26, 2026

- Exclusive

Artificial Intelligence (AI) is no longer a concept of the future—it is now a reality in our everyday lives. Whether we are watching movies on Netflix, using spam filters in email, or tracking fitness through apps, AI is quietly working behind the scenes. However, as this technology grows more powerful, concerns about its risks, misuse, and impact on human rights are also increasing.

In this context, the European Union has introduced the EU AI Act, the world’s first comprehensive legal framework designed to define the boundaries and responsibilities of AI usage. Its core philosophy is clear: AI should serve humanity, not work against it.

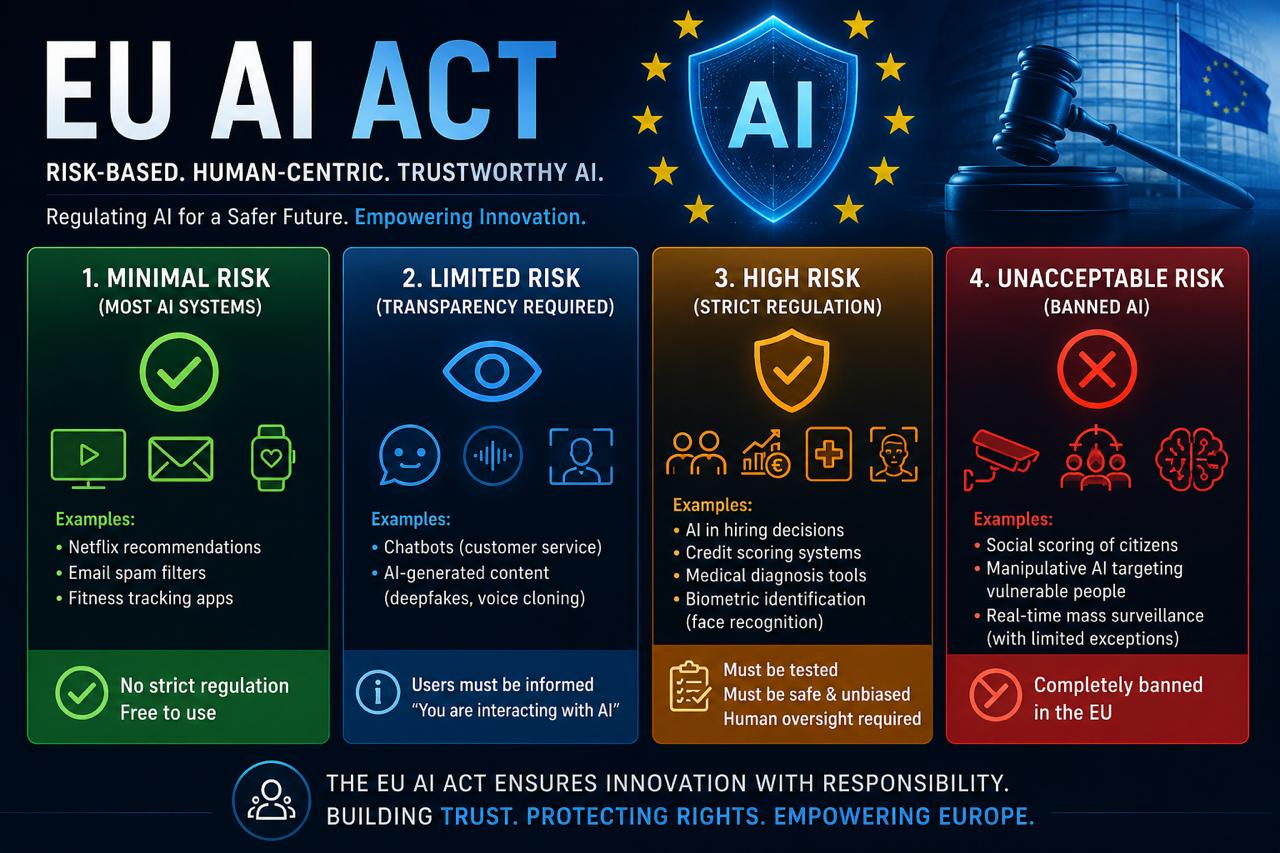

One of the most important aspects of the EU AI Act is its risk-based classification system. Instead of treating all AI systems equally, it categorizes them into four levels based on risk.

The first level is Minimal Risk, which includes most common AI applications such as recommendation systems and spam filters. Because these systems pose very low risk, the Act places no specific obligations on them, although providers may choose to follow voluntary codes of conduct.

The second level is Limited Risk, where transparency becomes essential. This includes chatbots and AI-generated content such as deepfakes. Users must be told when they are interacting with AI, and AI-generated content must be identifiable as such. This transparency forms the foundation of trust.

The third level is High Risk, covering the most critical and sensitive areas. AI used in job recruitment, bank credit scoring, or medical diagnosis falls into this category. Errors in these systems can significantly affect people’s lives. Strict requirements therefore apply, such as risk management, mandatory testing and conformity assessment before deployment, technical documentation, and human oversight.

The fourth level is Unacceptable Risk, which includes AI systems that pose a direct threat to human rights—such as social scoring or technologies that covertly manipulate human behavior. These are outright banned within the EU.
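
For readers who think in code, the four tiers above can be pictured as a simple lookup table. The sketch below is only an illustration built from the examples named in this article: the labels, the `describe` helper, and the one-line obligation summaries are hypothetical, and in practice the tier of a real system depends on legal analysis of the Act itself rather than on a keyword match.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk levels described in the article."""
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Illustrative mapping that uses only the examples named in the article.
# The keys are hypothetical labels, not terms defined by the Act.
EXAMPLE_USE_CASES = {
    "recommendation_system": RiskTier.MINIMAL,
    "spam_filter": RiskTier.MINIMAL,
    "chatbot": RiskTier.LIMITED,
    "ai_generated_content": RiskTier.LIMITED,
    "job_recruitment": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "medical_diagnosis": RiskTier.HIGH,
    "social_scoring": RiskTier.UNACCEPTABLE,
    "covert_behavioral_manipulation": RiskTier.UNACCEPTABLE,
}

# One-line summaries of what each tier implies, paraphrased from the article.
OBLIGATIONS = {
    RiskTier.MINIMAL: "little to no regulation",
    RiskTier.LIMITED: "users must be told they are dealing with AI",
    RiskTier.HIGH: "mandatory testing, safety assurance, and human oversight",
    RiskTier.UNACCEPTABLE: "banned within the EU",
}


def describe(use_case: str) -> str:
    """Return a short summary for a use case, if this sketch knows it."""
    tier = EXAMPLE_USE_CASES.get(use_case)
    if tier is None:
        return f"{use_case}: not covered by this sketch"
    return f"{use_case}: {tier.value} risk, {OBLIGATIONS[tier]}"


if __name__ == "__main__":
    for name in ("spam_filter", "chatbot", "credit_scoring", "social_scoring"):
        print(describe(name))
```

The point of the table is the shape of the law rather than its detail: obligations grow with the tier, and the top tier is not regulated but prohibited.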

Why is this globally significant?

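The reach of this law is not limited to companies based in the EU. Any company that places an AI system on the EU market, or whose system’s output is used in the EU, must comply with it, wherever that company is located. As a result, the EU AI Act is gradually becoming a de facto global standard.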

This is not a law to stop technology—it is an effort to make technology responsible. It seeks to create a balance between innovation and human rights.

The Future of AI: Control or Freedom?

The question is no longer whether AI will exist—it certainly will. The real question is how it will exist.

Unregulated AI can be dangerous, but excessive regulation may hinder innovation. The EU AI Act aims to present a practical middle path—where technology progresses while human rights and dignity remain protected.

AI will shape our future—this is now certain. But how fair, safe, and humane that future will be depends on the decisions we make today.

The EU AI Act represents a significant step in that direction—a future where AI is not only powerful, but also trustworthy.

Author Bio:

Khandker Shamim

Lawyer, engineer, and human rights activist.

He currently works at the intersection of management, policy research, technology, and law. He is the founder of PATH Bangladesh and is actively engaged in promoting public interest, justice, and inclusive development.